Back in 2018, Mudbath Digital were approached to build a new content site for a client. Having used Jamstack architecture for a number of our larger clients, we decided to try rolling it out for a smaller customer. Deploying this solution to AWS raised several technical problems we had not come across when deploying traditional CMS sites. In this article, I will run through the issues and complications we encountered.

Jamstack

While I don't intend to make this article an in-depth dive into Jamstack, I will briefly cover the architecture.

Taking a step back, it is best to start by describing what Jamstack architecture involves. For the uninitiated, Jamstack stands for JavaScript, APIs and Markup. I, however, find this description wholly unhelpful in explaining what the architecture actually does. Jamstack is loosely defined and can have different meanings and limitations depending on the developer or project.

Frequently, Jamstack is used to build a public-facing content site. The most common components are:

- Headless CMS - A platform used to create and update content

- Static Site Generator - A framework to generate static HTML pages

- CDN - A platform to distribute assets across the web

Content is authored within a Headless CMS, which in turn is used to generate a website with the Static Site Generator. This website is then hosted on a CDN.
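To make the flow concrete, here is a minimal sketch of the static site generation step, assuming the content entries have already been fetched from the headless CMS as plain dictionaries. The entry fields (`slug`, `title`, `body`), `PAGE_TEMPLATE` and `render_site` are hypothetical; a real static site generator does far more (routing, asset bundling, image processing).

```python
from html import escape

# Hypothetical content entries, standing in for what a headless CMS API would return.
ENTRIES = [
    {"slug": "about", "title": "About us", "body": "We build websites."},
    {"slug": "contact", "title": "Contact", "body": "Say hello <here>."},
]

# Hypothetical page template; {title} is used twice and filled in by str.format.
PAGE_TEMPLATE = (
    "<!DOCTYPE html><html><head><title>{title}</title></head>"
    "<body><h1>{title}</h1><p>{body}</p></body></html>"
)


def render_site(entries):
    """Render each CMS entry to a static HTML page keyed by its output path.

    The returned mapping is the build artifact: plain files that can be uploaded
    to a static host or CDN origin, with no server running at request time.
    """
    pages = {}
    for entry in entries:
        path = f"{entry['slug']}/index.html"
        # Escape CMS content so authored text cannot inject markup into the page.
        pages[path] = PAGE_TEMPLATE.format(
            title=escape(entry["title"]),
            body=escape(entry["body"]),
        )
    return pages


if __name__ == "__main__":
    for path, html in render_site(ENTRIES).items():
        print(path, len(html))
```

Because the output is just files, the CDN in the last step never needs to talk to the CMS at request time.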

This approach has a number of advantages:

- Security - No publicly exposed servers to compromise

- Cheap Hosting - CDNs are significantly cheaper than servers

- Lower Maintenance - No servers to patch or maintain

- Easy rollback - Can revert to a previous build of a site

AWS Infrastructure

After a period of investigation, we narrowed down the set of AWS services we would recommend for hosting a Jamstack site.

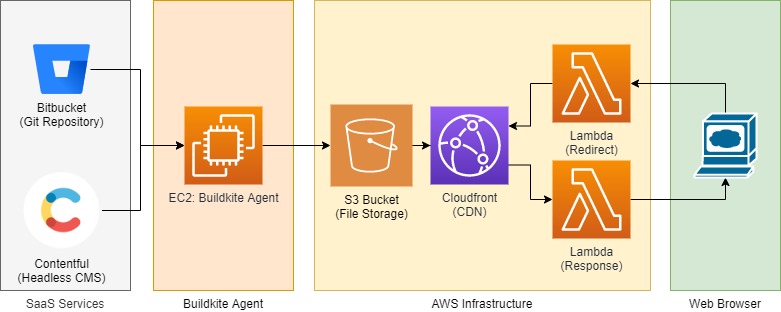

Content managed in Contentful is used to generate a static site in a Buildkite pipeline, with source control managed in Bitbucket. The artifact generated by Buildkite is then pushed to an S3 bucket, which in turn is used as the origin for the CloudFront CDN. And if that wasn't enough infrastructure for you, then there are the Lambdas...

Why are there Lambdas?

Lambda@Edge provides the ability to intercept and modify the HTTP requests and responses that pass through CloudFront.

Why do we want to modify these requests and responses?

To Redirect a User

- 301 Redirect for content which has been moved

- Managing trailing-slash redirects

- Apex domain to www redirects
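As an illustration, the three redirect cases above could be handled by a single viewer-request function. This is a minimal sketch, not the function we deployed: it assumes the Python runtime (Lambda@Edge supports Node.js and Python), that the distribution serves only the apex and `www` hostnames over HTTPS, and that `MOVED_CONTENT` is a hypothetical hand-maintained redirect table. The event and response shapes follow CloudFront's documented Lambda@Edge format.

```python
# Hypothetical map of moved content: old path -> new path.
MOVED_CONTENT = {
    "/old-news/": "/news/",
}


def redirect(location):
    """Build a CloudFront-generated 301 response."""
    return {
        "status": "301",
        "statusDescription": "Moved Permanently",
        "headers": {"location": [{"key": "Location", "value": location}]},
    }


def lambda_handler(event, context):
    # Apply every rule first, then issue at most one redirect rather than a chain.
    request = event["Records"][0]["cf"]["request"]
    host = request["headers"]["host"][0]["value"]
    uri = request["uri"]

    # Apex to www: example.com -> www.example.com (assumes only these two hostnames).
    target_host = host if host.startswith("www.") else "www." + host

    # Trailing slash: /about -> /about/, but leave files such as /app.js untouched.
    last_segment = uri.rsplit("/", 1)[-1]
    target_uri = uri if uri.endswith("/") or "." in last_segment else uri + "/"

    # Moved content, looked up on the normalised path.
    target_uri = MOVED_CONTENT.get(target_uri, target_uri)

    if target_host == host and target_uri == uri:
        return request  # Nothing to change; CloudFront carries on to the cache/origin.

    query = request.get("querystring", "")
    location = f"https://{target_host}{target_uri}" + (f"?{query}" if query else "")
    return redirect(location)
```

Collapsing all rules into one response means a visitor hitting `example.com/old-news` gets a single 301 to `https://www.example.com/news/` instead of three hops.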

To Set Security Headers for assets

- Content-Security-Policy

- X-Frame-Options

- X-XSS-Protection and others
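Security headers are typically added on the way back out, in an origin-response function, so CloudFront caches the response with the headers already attached. The sketch below assumes the Python runtime; the header values in `SECURITY_HEADERS` are placeholder assumptions (including the two extra headers standing in for "and others") and would need tuning for a real site, especially the Content-Security-Policy.

```python
# Placeholder policy values (assumptions); tune these for the real site before use.
# Keys are lower-case because CloudFront's event format indexes headers that way.
SECURITY_HEADERS = {
    "content-security-policy": ("Content-Security-Policy", "default-src 'self'"),
    "x-frame-options": ("X-Frame-Options", "DENY"),
    "x-xss-protection": ("X-XSS-Protection", "1; mode=block"),
    "x-content-type-options": ("X-Content-Type-Options", "nosniff"),
    "strict-transport-security": (
        "Strict-Transport-Security",
        "max-age=63072000; includeSubDomains",
    ),
}


def lambda_handler(event, context):
    """Origin-response handler: attach security headers to every response from S3."""
    response = event["Records"][0]["cf"]["response"]
    headers = response["headers"]
    for name, (key, value) in SECURITY_HEADERS.items():
        # Overwrite any existing value so the policy is applied consistently.
        headers[name] = [{"key": key, "value": value}]
    return response
```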

The Grievances

The infrastructure required to host what was meant to be a simple public-facing site evolved into a large, sprawling ecosystem of AWS services. This architecture was meant to reduce complexity and maintenance, but it had the opposite effect.

Build and Hosting Cost

Running Buildkite agents can get expensive. Not only do you need to pay for the EC2 instance the agents run on, but you also need to pay licensing costs for each developer who needs access to the build pipeline. And every developer and their dog will want access to the pipeline when working on a project, which rapidly increases the overall cost of running the system.

Complexity

By the time we had finished standing up the site, we had seven separate pieces of AWS infrastructure. Most egregious of all:

- An EC2 Buildkite agent which needs regular patching and maintenance

- A bespoke deployment process

- Two Lambdas which will need their own deployment pipeline

- Then repeat all the above for each environment: Dev, QA, UAT, Production...

Deployment Process

With our own deployment process, we pushed artifacts directly to S3, so assets from previous builds were never cleaned up and were left behind in the bucket. This was a limitation of our deployment approach: we did not want to delete the existing site before each deployment, as that would have caused a brief outage on every deployment.

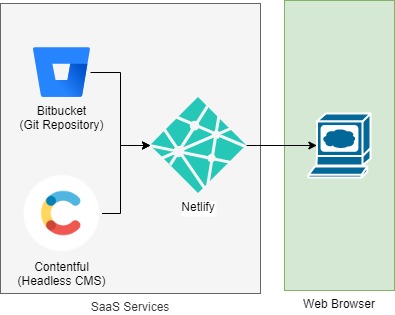
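One way to clean up leftover assets without emptying the bucket first is to split a deployment into two phases: upload the whole new build, then delete only the keys the new build no longer contains. This is a hypothetical sketch of that idea, not what we shipped; an in-memory dict stands in for the S3 bucket. Note that it is still not truly atomic: between the two phases, or from a cached HTML page, a visitor could request an asset that has just been deleted.

```python
def apply_deployment(bucket, build):
    """Publish `build` into `bucket` (both dicts of key -> content) without an empty window.

    Phase 1 writes every file from the new build, overwriting keys that already exist.
    Phase 2 only then removes keys that the new build no longer contains.
    Returns the sorted list of stale keys that were removed.
    """
    # Phase 1: upload everything first, so the site is never missing its current files.
    for key, content in build.items():
        bucket[key] = content

    # Phase 2: anything left over that is not part of the new build is stale.
    stale = sorted(set(bucket) - set(build))
    for key in stale:
        del bucket[key]
    return stale


if __name__ == "__main__":
    bucket = {"index.html": "v1", "app.1234.js": "old bundle"}
    removed = apply_deployment(bucket, {"index.html": "v2", "app.5678.js": "new bundle"})
    print(removed, sorted(bucket))
```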

Netlify Infrastructure

Now, instead of building and managing our own infrastructure, let us move to the managed service Netlify. Suddenly there is far less infrastructure and far fewer moving pieces to keep track of. Just one service to rule them all. Netlify isn't going to fit every use case, and I cover its limitations below, but first let's revisit my original grievances.

Build and Hosting Cost

Hosting is now just $20 USD per developer. This price is equivalent to what you would pay for Buildkite, but instead of just getting a build service you also get integrated hosting.

Complexity

As stated above, we have gone from seven separate services down to three, significantly reducing onboarding effort and infrastructure maintenance.

Atomic Deployments

Deployments are now atomic: the production site is cut over to the new build immediately, and no artifacts from previous deployments are left behind. Not only that, we can now easily roll back to a previous build.
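A useful mental model for atomic deployments and rollbacks is a set of immutable builds plus a single pointer to the live one. The toy `Site` class below is a hypothetical illustration of that model only; it does not describe how Netlify implements deployments internally.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Site:
    """Toy model of atomic deploys: immutable builds plus a single 'live' pointer."""

    deploys: Dict[str, Dict[str, str]] = field(default_factory=dict)  # deploy id -> {path: content}
    live: Optional[str] = None  # id of the deploy currently being served

    def publish(self, deploy_id: str, files: Dict[str, str]) -> None:
        # Each build is stored whole (copied) and never modified afterwards.
        self.deploys[deploy_id] = dict(files)
        # Cut-over is one pointer change: visitors see the old build or the new one, never a mix.
        self.live = deploy_id

    def rollback(self, deploy_id: str) -> None:
        # Rolling back is the same pointer change, aimed at an earlier build.
        if deploy_id not in self.deploys:
            raise KeyError(f"unknown deploy {deploy_id!r}")
        self.live = deploy_id

    def serve(self, path: str) -> Optional[str]:
        # Only files from the live build are visible; older builds leave nothing behind.
        if self.live is None:
            return None
        return self.deploys[self.live].get(path)
```

Contrast this with pushing files into a single S3 bucket, where old and new files share one namespace and cleanup has to be done by hand.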

Feature Branches

Bonus!!! We now have feature branches. When configured, every time you push a branch or open a PR, a whole separate environment can stand itself up automatically. This lets you test features in isolation, rather than relying on a dedicated Dev or QA environment, which encourages big-bang releases.
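Each of those environments gets its own URL. Netlify's branch deploys and deploy previews follow a `<branch>--<site-name>.netlify.app` and `deploy-preview-<PR number>--<site-name>.netlify.app` pattern; the sketch below derives such URLs for a hypothetical site name. How Netlify sanitises branch names containing characters like `/` is an assumption here, so treat `branch_slug` as illustrative and check the URL shown in the Netlify UI.

```python
import re
from typing import Optional


def branch_slug(branch: str) -> str:
    # Assumption: lower-case the name and collapse anything outside [a-z0-9-] into "-".
    return re.sub(r"[^a-z0-9-]+", "-", branch.lower()).strip("-")


def preview_url(site_name: str, branch: Optional[str] = None, pr_number: Optional[int] = None) -> str:
    """Return the per-PR or per-branch preview URL for a (hypothetical) Netlify site."""
    if pr_number is not None:
        return f"https://deploy-preview-{pr_number}--{site_name}.netlify.app"
    if branch is None:
        raise ValueError("pass either a branch name or a PR number")
    return f"https://{branch_slug(branch)}--{site_name}.netlify.app"


if __name__ == "__main__":
    print(preview_url("my-site", branch="feature/Login"))
    print(preview_url("my-site", pr_number=42))
```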

Limitations

There are a number of limitations when working with Netlify. The per-developer cost is not insignificant once you start to scale up, although keep in mind that you no longer pay for Buildkite, which also has a per-developer pricing model. If you need to restrict access to your test sites, you will need to buy the Pro plan for its password protection feature, at additional cost.

Conclusion

To be fair, AWS offers a lot more than just static site hosting. It provides a fully customisable cloud environment on which to build your IT infrastructure. Netlify provides a solution to a very particular problem with a well-managed SaaS product. Running your own bespoke infrastructure can be acceptable if you have already invested heavily in a cloud provider.

However, I would recommend platforms like Netlify over managing your own infrastructure, as they provide many cost-saving features, especially if you're not big into running and maintaining your own infrastructure.

Caveat

There are alternative platforms available, such as AWS Amplify, which perform a similar role and which I will get into in another post. Azure also has a static site hosting platform, currently in preview, for those working with that cloud provider. This article primarily compares managing your own infrastructure with one of the more popular managed platforms on the market.